Platform Access Denied: What Happened When We Tried to Sell Tinfoil Hats Online

We applied for business accounts on Meta and TikTok. Both platforms flagged us within 24 hours. Neither provided a specific reason. No ads were submitted. No content was posted. The brand name was enough. This is the documented timeline — with screenshots.

What We Sell

Before the timeline, a clarification of what triggered it. TINFOIL sells:

Hats. Structured caps, dad hats, snapbacks, beanies, trucker hats, bucket hats, boonie hats. Manufactured by licensed partners in the United States. Standard headwear construction (cotton, polyester, nylon mesh) decorated with embroidery and screen printing. The same manufacturing process used by thousands of brands on every platform.

A Faraday phone pouch. The Signal Sleeve NR1 — a multi-layer conductive fabric pouch that blocks RF signals. Based on Faraday cage physics established in 1836. The same technology used in military SCIFs and hospital MRI rooms, adapted to a consumer form factor.

Digital products. A Field Manual PDF. That’s it.

No supplements. No medical devices. No health products. No ingestibles. No wearable electronics. No products that make health claims of any kind.

The brand narrative is built on an empirical MIT study, documented electromagnetic research, and the question of cognitive autonomy. The tone operates in deliberate ambiguity between satire and sincerity. We make no health claims. We make no efficacy claims. We sell hats with a point of view.

This is the product line that two of the world’s largest platforms flagged as impermissible.

The Timeline

What We Know

We don’t know specifically why we were flagged. The platforms didn’t tell us. In the absence of specific information, we can document what we observe:

The flag was pre-emptive. Neither platform reviewed content we posted or ads we submitted, because we hadn’t posted or submitted any. The flag was triggered by the application itself — likely the brand name, the website URL, or the product category. The system made a determination before encountering any actual content.

The flag was automated. The speed (under 24 hours on both platforms) and the absence of specific reasoning suggest automated content classification rather than human review. An algorithm categorized TINFOIL based on pattern matching — probably keyword association between “tinfoil,” “electromagnetic,” and content categories the platforms have flagged as problematic.

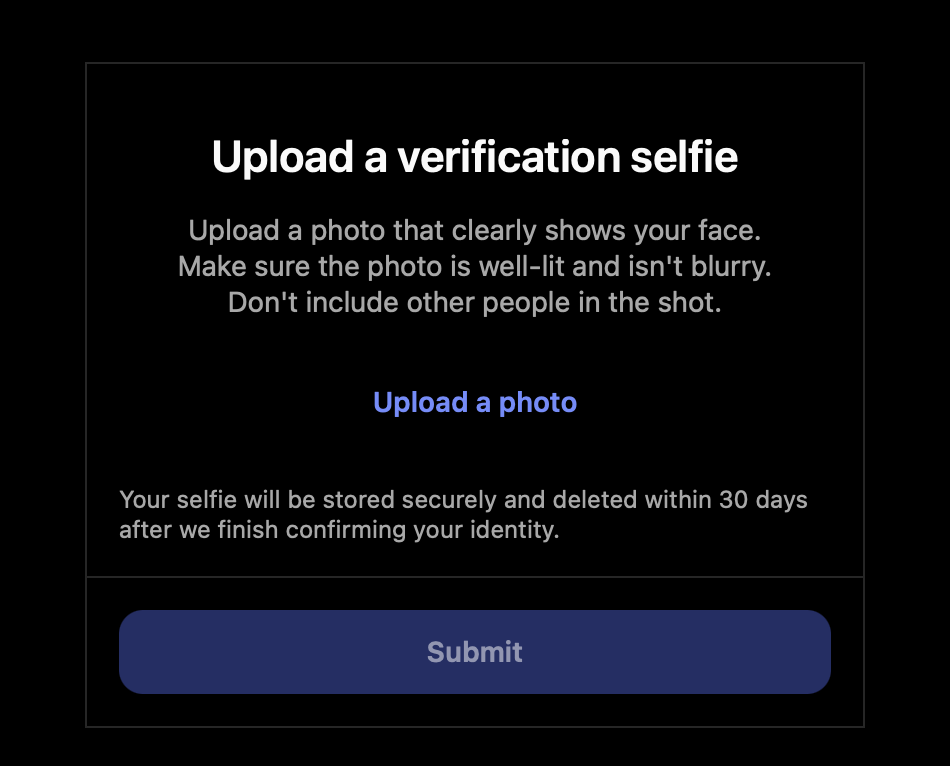

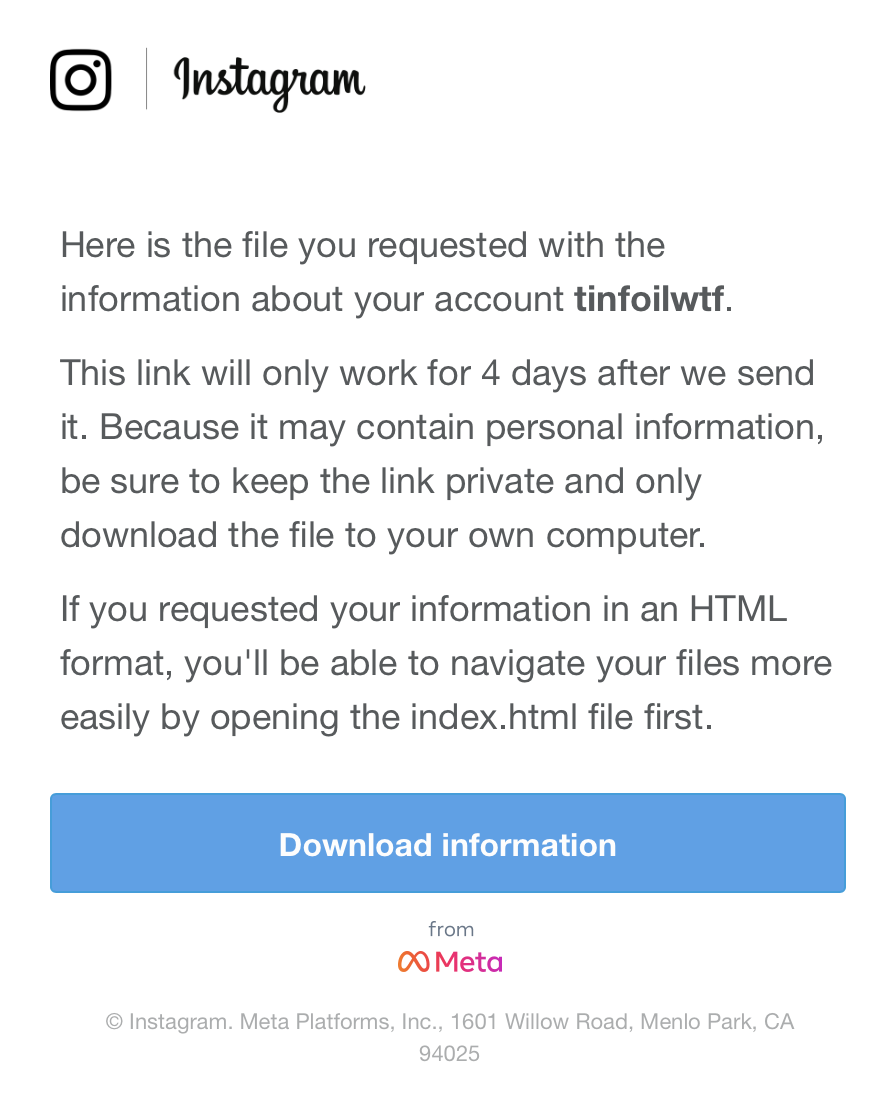

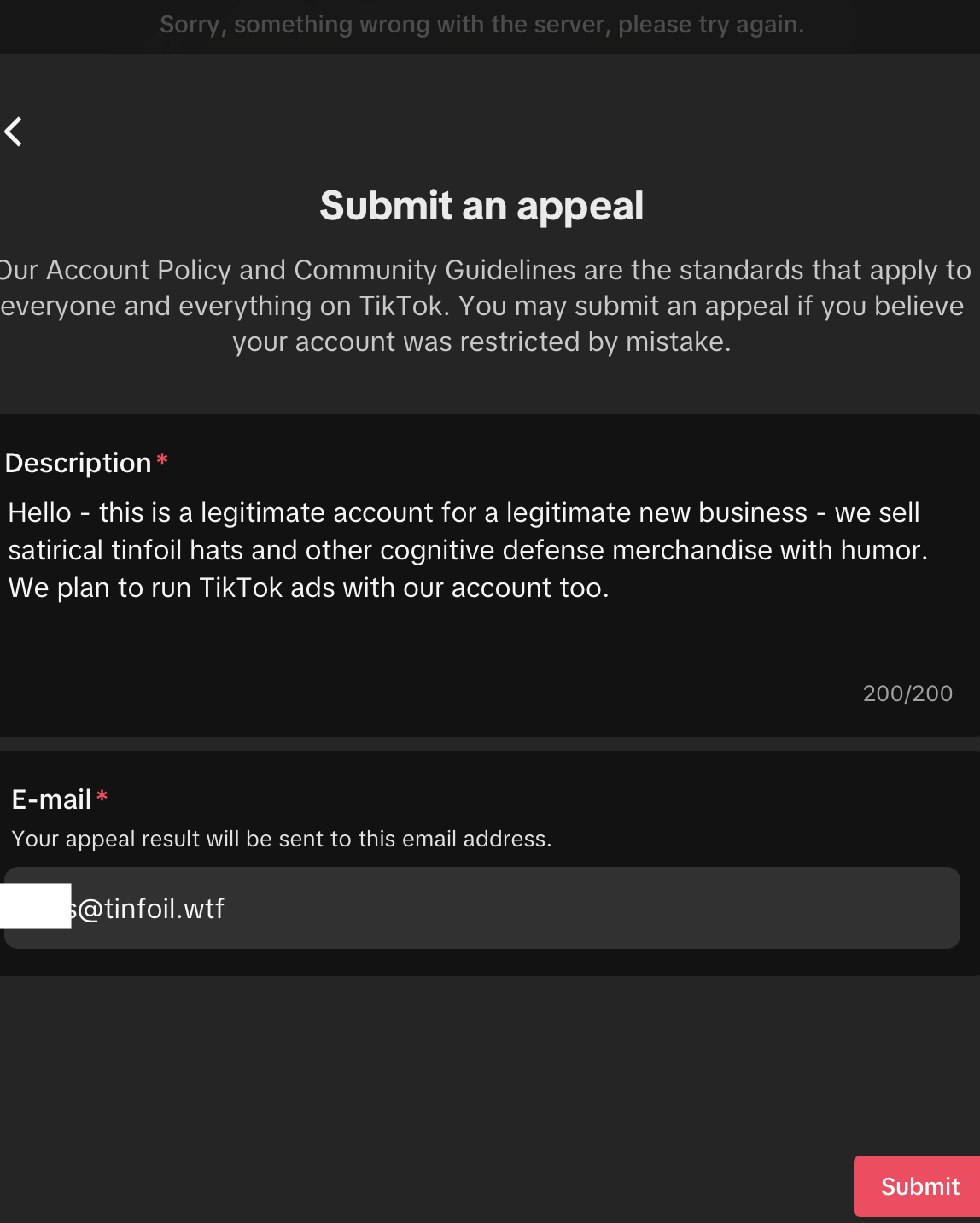

Every appeal and recovery process failed. Meta’s verification selfie — a face photo required for a business account — couldn’t be uploaded. Meta’s data download link didn’t work. TikTok’s appeal form threw server errors for four days straight. Three separate processes on two separate platforms, all non-functional. At some point, the pattern stops looking like bad luck and starts looking like a system that isn’t designed to process reversals.

The pattern is consistent. Two independent platforms, operated by different companies with different policies and different content moderation systems, reached the same conclusion about the same brand within the same timeframe. This suggests one of three things: shared classification data, similar algorithmic approaches to the relevant keywords, or a category-level policy that captures any brand associated with electromagnetic shielding regardless of actual content.

What We Don’t Claim

We are not claiming persecution. We are not claiming that Meta and TikTok are suppressing free speech. We are not claiming that our deplatforming is evidence that “they” don’t want you to know something. We are documenting what happened. The screenshots are above. The timeline is factual. The processes failed as described.

Platforms have the right to set advertising policies. They have the right to restrict accounts that violate those policies. What they don’t provide — and what we think is worth noting — is transparency about which policy was violated, specificity about what triggered the restriction, or a functional mechanism for correcting errors.

If we violated a policy, we’d like to know which one so we can evaluate whether to comply or to accept the restriction as a cost of the brand positioning we’ve chosen. We can’t make that evaluation because we haven’t been told what we did. One platform’s appeal process asks for biometric data it can’t accept. The other’s won’t let you submit the form. Neither provides human contact.

The absence of information is the story. Not the restriction itself — the silence around it.

The Algorithmic Classification Problem

The most likely explanation for our flagging is algorithmic keyword association. Platform content classification systems are designed to identify and restrict content in categories that have historically been problematic: health misinformation, conspiracy theories, fraudulent products, and political extremism. These systems operate on pattern matching — keywords, URL content, product descriptions, brand names — to classify accounts before human review.

“Tinfoil hat” is a phrase with strong associations in content moderation systems. It is culturally linked to conspiracy thinking. A brand called TINFOIL that sells headwear and references electromagnetic shielding will trigger every classifier trained on conspiracy-adjacent content — regardless of what the brand actually says or sells.
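To make the mechanism concrete, here is a minimal sketch of what surface-level keyword scoring could look like. We have no visibility into how either platform actually classifies applications, so everything here is an assumption for illustration: the keyword list, the weights, the threshold, and the function names are all hypothetical.

```python
import re

# Hypothetical keyword weights, invented for illustration only.
# Nothing here reflects any platform's real classifier.
KEYWORD_WEIGHTS = {
    "tinfoil": 0.5,
    "electromagnetic": 0.3,
    "shielding": 0.2,
    "conspiracy": 0.6,
}
FLAG_THRESHOLD = 0.7  # assumed cutoff


def surface_score(*fields: str) -> float:
    """Sum the weights of flagged keywords found anywhere in the given fields."""
    text = " ".join(fields).lower()
    tokens = set(re.findall(r"[a-z]+", text))
    return sum(weight for kw, weight in KEYWORD_WEIGHTS.items() if kw in tokens)


def is_flagged(brand: str, url: str, description: str) -> bool:
    """Flag an application on surface signals alone, before any content exists."""
    return surface_score(brand, url, description) >= FLAG_THRESHOLD


print(is_flagged(
    "TINFOIL",
    "https://example.com",
    "Cotton hats and a pouch using electromagnetic shielding fabric.",
))
```

Note what the sketch never looks at: whether the description makes a health claim, or whether any ad or post exists. A classifier of this shape only sees which words co-occur.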

This is the same pattern recognition problem that affects the MIT tinfoil hat study itself. The study is empirical research from one of the world’s most respected laboratories. It is also, by its topic, automatically categorized as humor or conspiracy by anyone who encounters the subject line without reading the paper. The cultural classification overrides the actual content.

Platform algorithms do the same thing at scale. They classify based on surface signals — keywords, associations, category patterns — because they process millions of applications and cannot evaluate each one on its merits. The system is optimized for efficiency, not accuracy. False positives (legitimate brands incorrectly flagged) are an acceptable cost when the priority is minimizing false negatives (problematic content that slips through).
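The tradeoff can be shown with a toy example. The scores and labels below are made up, and `confusion_counts` is a hypothetical helper, not anything a platform has published. The point is only that lowering the flagging threshold catches more problematic accounts while sweeping in more legitimate ones.

```python
# (classifier score, is the account actually problematic?)
# Made-up data for illustration only.
APPLICATIONS = [
    (0.95, True),
    (0.80, True),
    (0.75, False),
    (0.60, True),
    (0.55, False),
    (0.30, False),
    (0.10, False),
]


def confusion_counts(threshold: float) -> tuple[int, int]:
    """Return (false positives, false negatives) when flagging at `threshold`."""
    false_positives = sum(
        1 for score, bad in APPLICATIONS if score >= threshold and not bad
    )
    false_negatives = sum(
        1 for score, bad in APPLICATIONS if score < threshold and bad
    )
    return false_positives, false_negatives


for threshold in (0.9, 0.7, 0.5):
    fp, fn = confusion_counts(threshold)
    print(f"threshold={threshold}: false positives={fp}, false negatives={fn}")
```

With this toy data, a threshold of 0.9 misses two problematic accounts and flags no legitimate ones, while a threshold of 0.5 misses none and flags two legitimate ones. A system tuned to drive false negatives to zero lands at the second setting.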

We are a false positive. We think. We can’t be sure, because nobody will confirm or deny it.

What This Means for the Brand

The practical impact is that TINFOIL cannot advertise on Meta (Facebook, Instagram) or TikTok. These are two of the largest social media advertising platforms in the world. For a direct-to-consumer brand that relies on digital marketing to drive traffic, this is a significant constraint.

We’ve adapted. Our content strategy is built on organic search through the Dispatches blog — 20+ research-quality posts targeting long-tail keywords that bring traffic without paid advertising. Our social presence operates on X, where the account is operational and the content is not restricted. Our email list (The Wrap) provides a direct channel that no platform can interrupt.

The constraint has, arguably, produced a better brand. The content strategy we built because we can’t run ads is generating more durable value — SEO authority, backlinks, trust, and an audience that arrived through intellectual engagement rather than impulse-click advertising. The people who find TINFOIL through a dispatch about the FCC’s outdated guidelines or relay attack prevention are better customers than the people who would have clicked a Facebook ad.

We’re not grateful for the restriction. But we’re not going to pretend it hasn’t forced us to build something more resilient than an ad-dependent business model.

The Question This Raises

TINFOIL’s experience is small and specific to us. But the pattern it illustrates is not. Automated content classification systems determine what products, brands, and ideas are permitted to reach audiences on the platforms where most commercial activity now occurs. These systems operate without transparency, without specificity, without functional appeal mechanisms, and at a scale that makes individual false positives statistically invisible.

If a hat brand can be silently removed from two platforms without explanation — and neither platform’s appeal process actually functions — the question is not “why did this happen to us?” The question is: how many other legitimate products, brands, and ideas have been silently classified out of existence by systems that optimize for efficiency over accuracy — and would anyone know?

We noticed because we’re a brand built around noticing things. Most businesses that get flagged don’t have a dispatch system to document it. They just disappear from the platforms, and the platforms’ users never know they existed.

That’s the kind of question we think is worth a hat.

Update policy: If either platform responds to our appeals — or if their forms begin accepting submissions — we will update this dispatch with the response and the outcome. If the appeals are denied with specific reasoning, we will publish the reasoning in full. If the appeals are approved, we will note that too. This dispatch is a living document. The timeline continues until it resolves — or until it becomes clear that resolution is not part of the process.

Still Operational

Two platforms said no. The hats are still for sale. The research is still published. The dispatches are still going out weekly. If a platform won’t let you see us, you know where to find us.