The Reliable Source

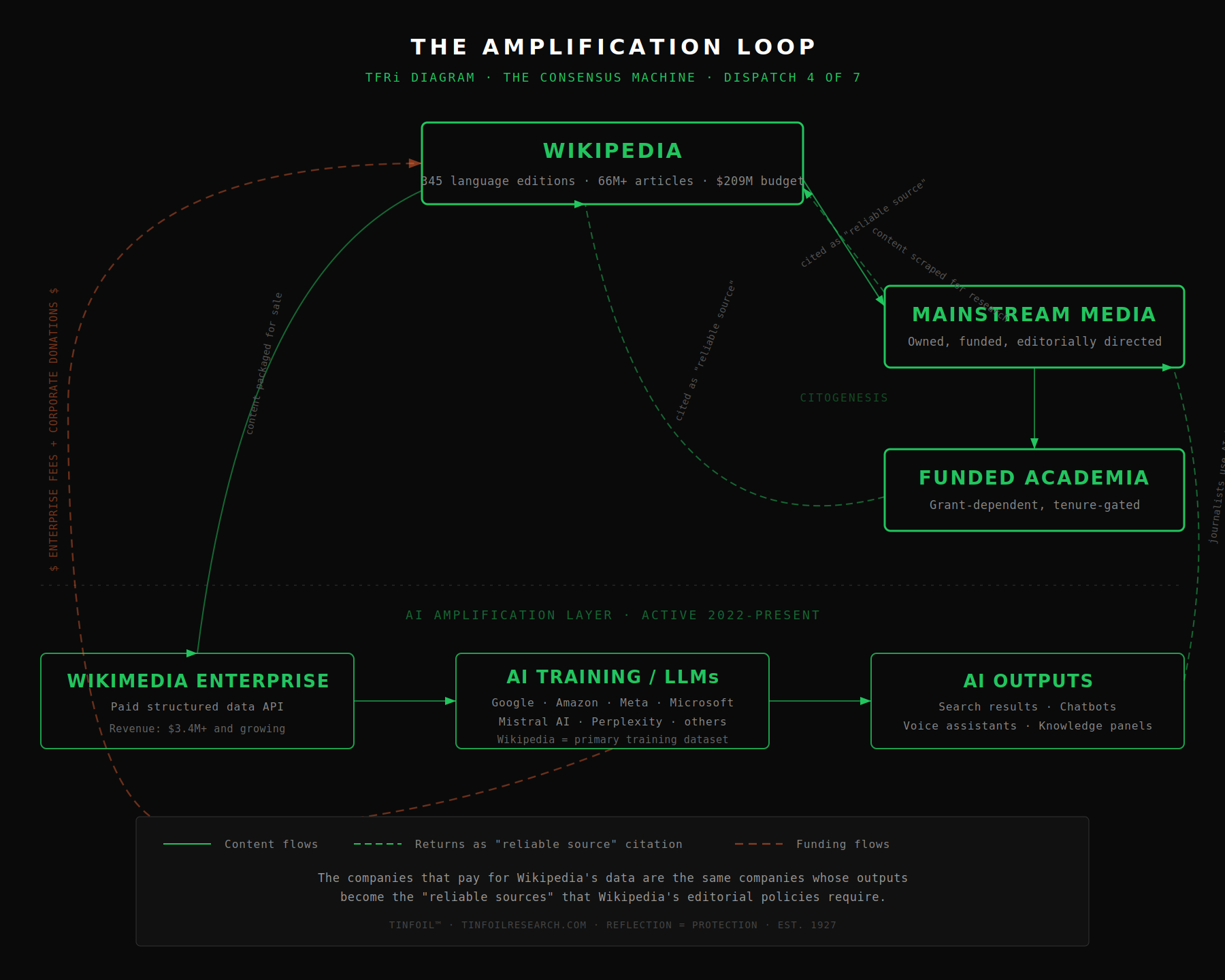

Wikipedia defines what counts as a reliable source. Mainstream institutions cite Wikipedia. Wikipedia cites mainstream institutions. The loop closes. In 2022, the Wikimedia Foundation began selling structured data access to Google; Amazon, Meta, and Microsoft have since become customers. These are the same companies whose news divisions and platforms Wikipedia’s editors cite as authoritative. In 2026, those companies are training AI systems on Wikipedia’s content. The AI outputs are becoming the next generation of sources. The loop is not closing. It has closed.

The Encyclopedia That Anyone Can Edit

Wikipedia is the largest encyclopedia in human history. The English-language edition contains more than seven million articles. The site exists in 345 active language editions, collectively containing over 66 million articles. It ranks among the ten most visited websites on Earth, drawing billions of page views per month. When you search for nearly any topic, Wikipedia is the first result. For most people, it is the last result too. They read the Wikipedia article and move on. The article becomes the fact.

The site’s subtitle is “the free encyclopedia that anyone can edit.” This is technically true and functionally misleading. Anyone can edit. Not everyone does. And the people who do are not a representative sample of humanity.

The Wikimedia Foundation’s own Community Insights surveys provide the data. According to the 2020 report, 87 percent of Wikimedia contributors globally are male. Fewer than 1 percent of Wikipedia editors in the United States identify as Black or African American, against 11.6 percent of the U.S. population. Only 1.5 percent of editors worldwide are based in Africa, a continent containing 17 percent of the world’s population. The average active contributor is in the 35-44 age range. The editor base is disproportionately concentrated in North America and Europe.

The demographic skew is not a secret. Wikipedia publishes these numbers. Jimmy Wales, the site’s co-founder, has called the gender gap “the biggest issue.” Wikimedia has run programs, set goals, and funded initiatives to address it. The 2015 target of 25 percent female editorship was not met. As of the most recent data, women constitute roughly 13 to 15 percent of contributors, depending on which survey and which language edition you consult.

But the demographic skew is only the surface problem. The structural problem is deeper. The specific consequences of this demographic composition for articles on TINFOIL’s subject matter are examined in The Entry.

The One Percent

In 2017, researchers at Purdue University published a study analyzing the 250 million edits made on Wikipedia during its first decade of existence. Their finding: approximately 1 percent of Wikipedia’s editors generated 77 percent of the site’s content.

A 2007 study by researchers at the University of Minnesota, published as a peer-reviewed paper, measured what they called “persistent word views,” the text that actually survives and is read by Wikipedia’s audience. They analyzed 25 trillion persistent word views attributable to registered users between September 2002 and October 2006. The top 10 percent of editors by edit count were credited with 86 percent of persistent word views. The top 1 percent accounted for about 70 percent. The top 0.1 percent, approximately 4,200 users, were attributed 44 percent: nearly half of Wikipedia’s visible content, produced by a group that could fit in a mid-sized concert hall.

The study’s authors concluded that elite editors “dominate what people see when they visit Wikipedia.”

This is not, by itself, a scandal. All large collaborative projects develop hierarchies. The question is not whether a small group controls most of the output. The question is what rules that small group enforces, and what those rules exclude.

The Open Door

Wikipedia’s own Protection Statistics page, as of January 2020 (the most recent published snapshot), reports that of 6,007,216 articles in the English-language encyclopedia, only 4,032 were under “pending changes protection,” the system that requires edits by new or anonymous users to be reviewed before they become visible. That is 0.067 percent. Total articles under any form of edit protection, including semi-protection, extended-confirmed protection, and full protection, numbered 15,901, or 0.26 percent.

Wikipedia states this plainly: “It is correct to say that 99.74% of Wikipedia articles are open to editing by anyone.”
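The percentages follow directly from the three counts quoted above. A minimal sketch of the arithmetic, using only those figures (the variable and function names are illustrative, not Wikipedia’s):

```python
# Counts as quoted above from the January 2020 Protection Statistics snapshot.
total_articles = 6_007_216
pending_changes = 4_032      # articles under pending changes protection
any_protection = 15_901      # articles under any form of edit protection


def share(part: int, whole: int) -> float:
    """Return part/whole expressed as a percentage."""
    return 100 * part / whole


pending_pct = share(pending_changes, total_articles)
protected_pct = share(any_protection, total_articles)
open_pct = 100 - protected_pct  # everything not under any protection

print(f"pending changes: {pending_pct:.3f}%")
print(f"any protection:  {protected_pct:.2f}%")
print(f"open to anyone:  {open_pct:.2f}%")
```

Rounded to the same precision the page uses, the three values come out to 0.067, 0.26, and 99.74 percent.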

On the unprotected 99.74 percent, edits go live the moment they are saved. There is no queue. There is no reviewer. There is no waiting period. The only check is whether someone, a bot, a watchlist editor, or a passing reader, notices the edit after the fact and decides to revert it.

Wikipedia’s primary anti-vandalism bot, ClueBot NG, uses machine learning to detect and revert obvious vandalism. It processes non-vandalism edits in under a second and can revert detected vandalism within approximately ten seconds. But Wikipedia’s own documentation, on a page titled “Undetected vandalism” updated in November 2024, states that ClueBot NG “catches only about 40% of all vandalism (that we know about).” The page opens with the sentence: “This should alarm you.”

The same page catalogs what happens to the other 60 percent. As of early 2025, it records the longest undetected vandalism to any article at 18 years and 76 days. To a featured article: 14 years and 113 days. To a talk page: over 20 years. The page notes that these cases are “just a few of the thousands of known instances of long-undetected vandalism” and that “because undetected vandalism is, by definition, undetected, it is impossible to know how much there is.”

The bot catches someone writing an obscenity into an article. It does not catch someone systematically removing unfavorable sourced material from a political figure’s biography over a period of years. It does not catch someone subtly reframing a neutral sentence to imply a different conclusion. It does not catch someone adding a plausible-sounding false claim that does not trigger pattern matching. The system that protects Wikipedia is optimized for the kind of vandalism that does not matter. The kind that matters is invisible to it by design.

The articles are open. The editors are not. Wikipedia co-founder Larry Sanger reported in June 2025 that he had examined 10,000 editor blocks on Wikipedia and found that 47 percent were indefinite, meaning functionally permanent. In that sample, nearly half of all disciplinary actions amounted to a lifetime ban. If the sample is representative, the encyclopedia that anyone can edit has permanently expelled roughly half the people it has ever disciplined. The door is open. The bouncer has a long memory.

Wikipedia’s “Undetected vandalism” page explicitly names the downstream consequences: “Wikipedia is also heavily scraped by external sources, used to train AI models, and reproduced in Google search engine summaries.” It describes a process it calls citogenesis, in which undetected false information “leaps from blog posts to news articles to books and even academia” and acquires “false legitimacy” through repetition. This is Wikipedia documenting, on its own platform, that its quality control failures propagate into the broader information ecosystem. The documentation exists. The problem persists. Wikipedia publishes no systematic, current data on average revert times for vandalism. The last formal study was conducted on data from 2004-2006. The platform that defines what counts as reliable evidence for the rest of the internet has not updated its own evidence on how reliably it polices itself in nearly two decades.

The Policy

Wikipedia’s editorial system rests on three core content policies: Neutral Point of View (WP:NPOV), Verifiability (WP:V), and No Original Research (WP:NOR). Each is carefully written. Each is internally consistent. Together, they produce a system with a specific and documentable structural effect.

The Verifiability policy states that Wikipedia’s content is “determined by published information rather than editors’ beliefs, experiences, or previously unpublished ideas or information.” Even if something is true, it cannot be added to Wikipedia unless it has been published in what the system calls a “reliable source.” The burden of demonstrating verifiability falls on the editor who adds the material.

What counts as a reliable source? Wikipedia’s own guideline, WP:RS, provides the answer. Reliable sources are those “with a reputation for fact-checking and accuracy.” In practice, this means mainstream newspapers, peer-reviewed academic journals, and established book publishers. Self-published sources, blogs, and most online-only publications are generally excluded. Primary sources (original documents, court transcripts, declassified files) are permitted but must be handled carefully and may not be used to support interpretive claims. Interpretation requires a secondary source: a journalist, an academic, or an institution that has processed the primary source and published a conclusion.

The policy is designed to prevent misinformation. On its own terms, it is reasonable. The problem is not the intent. The problem is the geometry.

Verifiability, Not Truth

Until 2012, Wikipedia’s Verifiability policy contained the sentence: “The threshold for inclusion in Wikipedia is verifiability, not truth.” The sentence was removed after a long internal debate, but the principle it expressed was not. Wikipedia’s own essay page on the subject, still active, states: “Sometimes we know for sure that the reliable sources are in error, but we cannot find replacement sources that are correct.” It quotes Douglas Adams: “Where it is inaccurate it is at least definitively inaccurate. In cases of major discrepancy it’s always reality that’s got it wrong.”

That is Wikipedia, quoting a joke from a science fiction novel, to describe its own relationship to factual accuracy.

The policy produces specific, documented outcomes. The following cases are drawn from Wikipedia’s own records and from the reporting of the subjects involved:

The novelist Philip Roth attempted to correct Wikipedia’s claim about the inspiration for his novel The Human Stain. A Wikipedia administrator told his representative: “I understand your point that the author is the greatest authority on their own work, but we require secondary sources.” The author of a novel was not a credible source about his own novel. Roth was forced to publish an open letter in The New Yorker, which then became the “reliable secondary source” Wikipedia would accept. The truth was known. The truth-teller was rejected. The truth was accepted only after it was laundered through an institution Wikipedia already trusted.

The actress Olivia Colman attempted to correct her own birthday on Wikipedia. The day, month, and year were all wrong, making her eight years older than she actually was. No citation supported the false date. Wikipedia editors told her to “prove it.” The person whose birthday it was could not correct it without secondary source documentation of her own date of birth.

An anonymous editor added an incorrect release year, 1991, to the Wikipedia article for the Casio F-91W wristwatch. The BBC repeated it in a 2011 article. Because the BBC is a “reliable source,” the incorrect date, originating from an anonymous Wikipedia edit, became nearly impossible to remove. Communication with Casio, the company that manufactured the watch, repeatedly confirmed the correct year was 1989. But a primary source (the manufacturer) could not override a secondary source (the BBC), even when the secondary source’s information originated from Wikipedia. The error persisted for a decade. It was corrected only after a news outlet reported on the circular sourcing itself.

Wikipedia published a claim that the comedian Sinbad had died. Major news outlets reported it. His manager received condolence calls. Sinbad asserted in radio and television interviews that he was alive. Wikipedia insisted the death notice could only be reverted if confirmed by a “link-able secondary source.” The living subject of the article was not a sufficient source for the fact of his own existence.

An anonymous Wikipedia editor invented the nickname “Millville Meteor” for baseball player Mike Trout. A Newsday sports writer read it on Wikipedia and published it. The fabricated nickname, which had never existed before the anonymous edit, became real. As media critic Charles Seife observed, when false information from Wikipedia spreads to other publications, “it sometimes alters truth itself.”

Each of these cases resolved eventually. In each case, the resolution came not through the system working as designed, but through external pressure forcing the system to accommodate reality. The policy did not fail in these cases. The policy performed exactly as written. The documented outcome is the intended outcome: verifiability, not truth.

Wikipedia’s editorial system requires secondary sources to interpret primary evidence. A declassified government document proving that something happened is, under Wikipedia’s rules, insufficient on its own. What is required is that a journalist or academic say the document proves it. The journalist works for a media company with owners, advertisers, and an editorial line. The academic works in a department with funders, tenure committees, and grant dependencies. Peer-reviewed research is funded by government agencies (NIH, NSF, DARPA), foundations (Gates, Rockefeller, Sloan), and corporations. The funding determines which questions get asked, which studies are conducted, and which findings get published. Wikipedia then treats the output of this funded pipeline as the only acceptable evidence for its articles. The system does not merely privilege mainstream institutions’ conclusions. It privileges the entire upstream pipeline that determines which conclusions are produced. The person who funds the research is not disclosed on the Wikipedia article. The person who owns the newspaper is not disclosed on the Wikipedia article. The incentive structure is not a conspiracy. It is an economy.

The Fringe

Alongside the reliable sources policy sits a content guideline called WP:FRINGE, which governs Wikipedia’s treatment of what it calls “fringe theories.” The guideline states that “an idea that departs significantly from the prevailing views or mainstream views in its particular field” must not be given “undue weight.” A fringe theory “must not be made to appear more notable or more widely accepted than it is.”

The guideline’s interaction with the reliable sources policy produces a specific outcome. A claim is labeled “fringe.” Because it is fringe, it is not published in mainstream reliable sources. Because it is not published in mainstream reliable sources, it cannot be included in Wikipedia, or if included, must be presented as fringe. The classification generates its own evidence.

This is not a hypothetical concern. Wikipedia’s own internal discussions, visible on the Talk pages of WP:FRINGE, document years of editor debate over this exact problem. One editor noted on the guideline’s Talk page that “if the main reason sources are rejected as unreliable is because they publish a fringe idea, there is a risk of circular reasoning.” Another observed that the guideline “discusses legitimate minority views as if they are properly regarded as fringe.” The page WP:What FRINGE is not was created specifically to address the guideline’s misapplication, noting that it is “most often abused in political and social articles where better policies such as WP:NPOV or WP:UNDUE are appropriate” and that “citing WP:FRINGE in discussions and edit summaries is often done by POV pushers in an attempt to demonize viewpoints which contradict their own.”

The Neutral Point of View policy itself produces instructive contradictions. NPOV does not mean “no criticism.” It means that the article should represent the balance of reliable sources. When the Church of Scientology’s computers were found editing Wikipedia to remove criticism, the criticism they were removing was there because mainstream reliable sources (court documents, investigative journalism, government reports) were overwhelmingly critical. The “neutral” article was already critical, because that is what the source consensus produced. The system worked as designed. The design is the point.

A system that defines truth by reference to the current institutional consensus will, by definition, reproduce every error that consensus contains, for exactly as long as the consensus contains it.

Wikipedia:Fringe theories, content guideline, English Wikipedia

The Scanner

On August 14, 2007, Virgil Griffith, a graduate student in computation and neural systems at the California Institute of Technology and a visiting researcher at the Santa Fe Institute, released a tool called WikiScanner. The tool cross-referenced the IP addresses logged by Wikipedia for anonymous edits against publicly available data on which organizations owned those IP address ranges. The database contained 34,417,493 anonymous edits made between February 7, 2002 and August 4, 2007, from 2,668,095 different IP addresses.
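The cross-referencing step can be sketched in a few lines. What follows is an illustration of the general technique, not Griffith’s actual code: the ownership table, the edit log, and the function names are all hypothetical, and the address ranges are reserved documentation ranges rather than real allocations.

```python
import ipaddress
from collections import defaultdict

# Hypothetical ownership table: network range -> organization.
# These are reserved documentation ranges (RFC 5737), not real allocations.
OWNERS = {
    ipaddress.ip_network("192.0.2.0/24"): "Example Agency",
    ipaddress.ip_network("198.51.100.0/24"): "Example Corp",
}

# Hypothetical anonymous edit log: (IP address, article title).
EDITS = [
    ("192.0.2.17", "Article A"),
    ("198.51.100.200", "Article B"),
    ("203.0.113.5", "Article C"),  # falls in no known range
    ("192.0.2.99", "Article B"),
]


def owner_of(ip_text):
    """Return the organization whose range contains the address, or None."""
    ip = ipaddress.ip_address(ip_text)
    for network, org in OWNERS.items():
        if ip in network:
            return org
    return None


def edits_by_organization(edits):
    """Group edited article titles by the organization owning the editing IP."""
    grouped = defaultdict(list)
    for ip_text, article in edits:
        org = owner_of(ip_text)
        if org is not None:
            grouped[org].append(article)
    return dict(grouped)


result = edits_by_organization(EDITS)
for org, articles in result.items():
    print(org, "->", articles)
```

A match of this kind ties an edit to a network, not to a person. A lookup like this says nothing about who was sitting at the keyboard or why.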

The results were reported worldwide. Reuters reported that computers belonging to the CIA had been used to edit the Wikipedia article on the 2003 invasion of Iraq, including a chart showing casualties. CIA computers had also edited the article for former CIA director William Colby. An FBI computer had edited the article on the Guantanamo Bay detention facility. Computers from the Church of Scientology, the Democratic Congressional Campaign Committee, the Vatican, Diebold, ExxonMobil, Dow Chemical, the Australian Department of Prime Minister and Cabinet, and dozens of other organizations had been traced to edits that removed unfavorable information or added favorable language.

The Associated Press, reporting on WikiScanner, admitted that edits to Wikipedia had been made from its own computers. The New York Times, covering the same story, made the same admission.

WikiScanner could only trace anonymous edits, those made by users who were not logged in. Registered editors, who make the majority of edits and who include the high-volume editors responsible for most of Wikipedia’s visible content, were invisible to the tool. What WikiScanner documented was the floor, not the ceiling.

WikiScanner’s findings require careful framing. An edit originating from a CIA IP address does not prove that the CIA directed the edit. It proves that someone on the CIA’s network made the edit. That person could be an employee acting on instructions, an employee acting independently, or a visitor using the network. The same caveat applies to every organization WikiScanner identified. What the tool established beyond dispute is that anonymous edits to Wikipedia were routinely made from the networks of intelligence agencies, government departments, corporations, and media organizations, and that many of these edits involved removing criticism or adjusting the characterization of the editing organization’s activities. Whether these edits were authorized or freelance, they were real.

The Editor

In May 2018, news media in the United Kingdom reported on a Wikipedia account operating under the name “Philip Cross.” The account had made more than 130,000 edits over 14 years. Former UK ambassador Craig Murray, one of the account’s frequent targets, published an analysis showing that the account had edited Wikipedia every single day from August 29, 2013 to May 14, 2018: 1,721 consecutive days, including five Christmas Days.

The pattern of edits was specific. The account systematically edited the Wikipedia pages of left-leaning, anti-war journalists, politicians, and media organizations, adding negative material, removing positive material, and reframing neutral material. Targets included politician George Galloway (over 1,700 edits to his page), the media analysis group Media Lens (where the account was responsible for nearly 80 percent of all content), investigative journalist Nafeez Ahmed, Edinburgh University professor Tim Hayward, and Murray himself. On the other side, the account polished the pages of figures associated with pro-interventionist positions.

The BBC’s Trending unit investigated and established that Philip Cross was a real person living in England, operating under a name he did not normally use outside of Wikipedia. George Galloway raised the matter in public. Murray published extensive analysis. An Arbitration Committee case was opened on Wikipedia in June 2018. The account was eventually given a topic restriction on certain political pages. In October 2022, the account was suspended for “disruptive editing by repeatedly trying to game or push the limits” of its topic bans.

The case illustrates the asymmetry built into the system. A single prolific editor, operating for years with a documented pattern of political bias, was able to shape the Wikipedia entries that appear first in search results for dozens of public figures. The system’s own mechanisms for accountability operated slowly and narrowly. The thousands of biased edits accumulated over 14 years were not undone. They became the article.

The Conflict of Interest

The WikiScanner revelations and the Philip Cross affair are not isolated incidents. Wikipedia’s own article on “Conflict-of-interest editing on Wikipedia” documents a sustained history of institutional and individual manipulation.

In 2006, United States congressional staff were found editing articles about members of Congress. In 2007, Microsoft offered a software engineer money to edit Wikipedia articles on competing code standards. In 2011, the British PR firm Bell Pottinger was found editing Wikipedia articles about its clients. In 2012, the Bureau of Investigative Journalism uncovered that UK Members of Parliament or their staff had made almost 10,000 edits to the encyclopedia, and that the Wikipedia articles of almost one in six MPs had been edited from within Parliament, many changes involving the removal of unflattering details during the 2009 expenses scandal. In 2012, Wikipedia traced 250 accounts to a firm called Wiki-PR that engaged in paid editing. In 2015, Operation Orangemoody uncovered another paid-editing scheme involving 381 accounts.

Each case was discovered, documented, and in most instances, addressed by Wikipedia’s internal mechanisms. But each case also demonstrates the same structural vulnerability: the encyclopedia that defines what counts as knowledge is editable by the people and institutions whose activities that knowledge describes. The system requires its editors to be disinterested. The system has no mechanism for ensuring that they are.

The Hidden Layer

Not all of Wikipedia is visible to the public. When an article or revision is deleted by an administrator, for policy violations, legal concerns, or other reasons, it is removed from public view but remains on the servers, accessible only to the approximately 800 administrators on English Wikipedia. A deeper level, called “oversight” or “suppression,” hides content even from most administrators, restricting visibility to a handful of users with special permissions. Wikipedia’s own documentation describes these layers.

The Philip Cross case produced a specific example. When an editor created a Wikipedia article about Philip Cross documenting the controversy, the article was deleted and the editor who created it was permanently banned from the platform. The deleted article remains on Wikipedia’s servers, visible only to administrators. The subject of thousands of documented biased edits is protected by the system. The person who documented those edits is banned from it.

The Co-Founder

Larry Sanger co-founded Wikipedia with Jimmy Wales in January 2001. He proposed the wiki format, named the project, and served as its first and only editorial employee. He departed in March 2002 after the dot-com crash eliminated his position. In the more than two decades since, he has become what Vice in 2015 called “Wikipedia’s most outspoken critic.”

Sanger’s criticisms have been specific and escalating. In 2004, he identified “lack of respect for expertise” as the root problem. In 2007, he called Wikipedia “broken beyond repair.” In 2021, he stated publicly that it could no longer be trusted on politically sensitive topics. In 2025, he published a 37,000-word reform proposal. None of his proposed reforms have been adopted. His alternatives, including Citizendium, Everipedia, and the Knowledge Standards Foundation, failed to displace Wikipedia’s dominance.

The pattern is structural. The co-founder of the consensus machine says the consensus machine is broken. The consensus machine is the first thing you read about him when you search his name. The Co-Founder examines the full documented record of what happens when the most credible possible critic of the system attempts to reform it from outside.

The Budget

Wikipedia presents itself as a grassroots volunteer project. It asks readers for $2.75 through banner advertisements that warn the site may not survive without donations. The financial reality, documented in the Wikimedia Foundation’s own audited reports, is different.

In fiscal year 2024-2025, the Wikimedia Foundation reported total revenue of $208.6 million and total expenses of $190.9 million. It employs over 640 staff across nearly every time zone. Its net assets stand at approximately $255 million. The Wikimedia Endowment, a separate permanent fund established in 2016, was valued at $144 million as of June 2024. The Foundation has received clean audits from KPMG for 20 consecutive years.

The majority of revenue comes from individual donations: eight million donors in fiscal year 2023-2024, with an average donation of $10.58. But the Foundation also receives significant institutional funding. Major donors to the Foundation and its Endowment include Google (over $2 million in direct donations, plus Enterprise fees), Amazon ($1 million in multiple years), Meta/Facebook ($1 million to the Endowment), George Soros ($2 million to the Endowment), Craig Newmark Philanthropies ($3.5 million or more), Peter Baldwin and Lisbet Rausing through the Arcadia Fund ($8.5 million or more), the Rockefeller Foundation ($1 million grant), and the Alfred P. Sloan Foundation (multiple multi-million-dollar grants over many years). Corporate matching gift programs from Google, Microsoft, Apple, Goldman Sachs, and others contribute additional amounts annually.

In 2021, the Wikimedia Foundation launched Wikimedia Enterprise, a commercial product operated through a wholly-owned LLC. Enterprise sells structured, machine-readable data feeds to paying customers through high-speed APIs, providing cleaned, continuously updated Wikipedia content optimized for integration into search engines, voice assistants, AI training pipelines, and knowledge graphs. The content itself is freely licensed. What Enterprise sells is the pipe: reliability, speed, structure, and service-level agreements. As of January 2026, announced Enterprise partners include Google (the first paying customer in 2022), Amazon, Meta, Microsoft, Mistral AI, and Perplexity. Enterprise revenue was $3.4 million in fiscal year 2022-2023, with monthly revenue growing year over year.

The encyclopedia that defines what information is credible operates on a budget larger than most of the news organizations whose output it classifies as “reliable sources.” Its commercial customers include the same technology companies whose platforms and AI systems are shaped by Wikipedia’s editorial decisions. This is not hidden. It is published in audited financial statements. The question is whether the institutional scale matches the grassroots framing.

The Loop

The consensus machine does not work by suppressing information. It works by defining which information counts.

A Wikipedia article cites The New York Times. The New York Times cites Wikipedia. A peer-reviewed journal publishes a study. Wikipedia cites the study. The study’s background section cites Wikipedia. The Wikimedia Foundation has noted this dynamic in its own knowledge integrity white paper, observing that “technology platforms across the web are looking at Wikipedia as the neutral arbiter of information” while simultaneously noting “the possibility that parties with special interests will manipulate content.”

The word for this in Wikipedia’s own vocabulary is citogenesis. The term was coined by the webcomic xkcd in 2011: an unsourced claim is added to Wikipedia, a journalist reads it and includes it in an article, the article is then cited on Wikipedia as a reliable source for the claim. The information travels in a circle and emerges looking independently verified. Wikipedia acknowledges this problem. It has a template for flagging it. It publishes no data on how often the template is used. It acknowledges, in the Slate article that first documented the phenomenon at length, that incidents are “stumbled upon” rather than systematically detected, and that the platform has “no systematic way to identify each potential circular reference.” The mechanism to flag circular sourcing exists. The mechanism to measure whether the flag works does not.
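Structurally, citogenesis is a cycle in a citation graph. The sketch below shows what detecting one would involve if the citations were fully recorded. The graph, node names, and function are hypothetical, and the hard part in practice is the one the code assumes away: a journalist who takes a claim from Wikipedia rarely says so, which means the edge that completes the loop is usually missing from the record.

```python
# Hypothetical citation graph: each key cites the sources in its list.
CITES = {
    "wikipedia_article": ["news_story"],
    "news_story": ["wikipedia_article"],  # the journalist took the claim from Wikipedia
    "journal_paper": ["archive_document"],
    "archive_document": [],
}


def find_cycle(graph, start):
    """Depth-first search from start; return a citation path that loops, or None."""
    path = []        # current chain of citations being followed
    on_path = set()  # nodes in the current chain, for fast membership checks
    done = set()     # nodes fully explored without finding a loop

    def visit(node):
        path.append(node)
        on_path.add(node)
        for cited in graph.get(node, []):
            if cited in on_path:
                # The chain has returned to a source it already passed through.
                return path[path.index(cited):] + [cited]
            if cited not in done:
                found = visit(cited)
                if found:
                    return found
        on_path.discard(node)
        done.add(node)
        path.pop()
        return None

    return visit(start)


print(find_cycle(CITES, "wikipedia_article"))
print(find_cycle(CITES, "journal_paper"))
```

In this toy graph the first call returns the loop from the Wikipedia article through the news story and back, and the second returns None because the paper’s chain ends at an archive document.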

The Amplification

Consider how the system handles a claim labeled as a conspiracy theory. The claim is classified as fringe under WP:FRINGE. Because it is fringe, mainstream sources do not report on it, or report on it only as an example of fringe thinking. Because mainstream sources do not treat it as credible, Wikipedia editors cannot cite evidence for it under WP:RS. The claim remains fringe on Wikipedia. Researchers studying conspiracy theories cite Wikipedia’s classification. Wikipedia cites the researchers. The loop closes.

When the claim is later confirmed, as claims about MKUltra, COINTELPRO, NSA mass surveillance, and the Gulf of Tonkin fabrication eventually were, the Wikipedia article is revised. The revision is rarely accompanied by any notation that the previous version of the article was wrong, or that the system’s classification of the claim as fringe was itself an error. The article simply updates. The old version is visible in the edit history, but no one reads the edit history. They read the article.

Now add the layer that did not exist before 2022. Wikipedia’s content is sold through Wikimedia Enterprise to Google, Amazon, Meta, Microsoft, Mistral AI, and Perplexity, among others. These companies use it to train large language models and power search results, knowledge panels, voice assistants, and chatbots. The AI systems produce outputs that journalists consult while researching stories. The journalists’ stories become the “reliable sources” that Wikipedia editors cite. The information has traveled through Wikipedia, through the paid API, through the AI training pipeline, through the AI output, through the journalist, and back into Wikipedia, each step adding a layer of apparent independent verification that does not exist.

Wikipedia’s own “Undetected vandalism” page acknowledges the problem in the other direction: false information in Wikipedia is “heavily scraped by external sources, used to train AI models, and reproduced in Google search engine summaries,” acquiring “false legitimacy” as it propagates. The loop does not merely circulate consensus. It amplifies it. And now, for the first time, the amplification is automated.

Wikimedia Foundation, Knowledge Integrity White Paper, 2019

The Question This Dispatch Does Not Answer

This dispatch has documented the structure. The demographics. The concentration of editorial power. The policies that govern inclusion and exclusion. The documented cases of institutional and individual manipulation. The circular sourcing. The AI amplification layer. The funding relationships. The co-founder’s sustained critique. All of these facts are drawn from Wikipedia’s own data, Wikipedia’s own policy pages, Wikipedia’s own surveys, Wikipedia’s own audited financial statements, and reporting by the mainstream sources that Wikipedia itself classifies as reliable.

The question this dispatch does not answer is: what is the alternative?

Wikipedia replaced the previous system of encyclopedic knowledge, in which a small group of credentialed editors at Britannica or World Book decided what counted. That system had its own biases, its own demographic skew, its own structural exclusions. Wikipedia’s innovation was to make the process visible. The edit histories are public. The Talk pages are public. The policy debates are public. The surveys documenting the demographic skew are public. The financial statements are public. Everything described in this dispatch is drawn from publicly available information, much of it published by Wikipedia itself.

The problem is not that Wikipedia is worse than what came before. The problem is that Wikipedia has become the infrastructure. It is the first source consulted by journalists, the default training dataset for AI systems, the authority cited by other authorities. A system designed to summarize human knowledge has become the system that defines it. And the system, by its own documented admission, is not representative of the humans whose knowledge it claims to summarize.

This dispatch does not call for Wikipedia’s replacement. It calls for something simpler, and apparently harder: reading the system’s own documentation about itself and taking it seriously.

TINFOIL dispatches use primary sources: declassified documents, congressional hearing transcripts, court filings, patent records, published academic papers, and the actual text of the studies and policies they discuss. Wikipedia’s editorial system would classify most of this as “primary source” material requiring interpretation by a “reliable secondary source” before it could support a Wikipedia claim. By Wikipedia’s own rules, the documents that prove something happened are insufficient. What is required is that a journalist or academic, funded by the institutions described above, say the documents prove it. The question of who gets to interpret evidence is not a procedural detail. It is the mechanism by which the consensus machine operates. This series interprets the primary sources directly and tells you where to find them. You can read them yourself. That is the point.

This dispatch cites 38 sources across five categories. Estimated breakdown: platform self-reporting (Wikipedia’s own policy pages, survey data, financial statements, and articles about itself) ~45%; independent journalism (Reuters, BBC Trending, Bureau of Investigative Journalism, Slate, The Washington Post, Inside Philanthropy, Five Filters) ~20%; subjects’ own statements (Larry Sanger’s published writings and interviews, Craig Murray’s analysis, Philip Roth’s open letter) ~15%; academic and scholarly (Matei and Britt, Kittur et al., Zia et al., Charles Seife) ~15%; primary documents (WikiScanner database, original Wikipedia edits as primary artifacts) ~5%.

These percentages are editorial estimates, not computed metrics. A source may appear in more than one category. A dispatch examining a platform’s structural design will necessarily draw heavily from that platform’s own documentation, because the platform’s own policies, surveys, and financial disclosures are the primary evidence for how the platform operates. The relevant question is whether independent sources corroborate the factual claims. In this dispatch, all factual claims are independently verifiable through at least one non-subject source. The full source list follows.

Sources

Wikimedia Foundation, Community Insights Report, 2020. Data on editor demographics, gender ratio, geographic distribution.

Wikimedia Foundation, “Change the Stats” initiative page. Published demographic data including 87% male contributor ratio, less than 1% Black U.S. editors, 1.5% Africa-based editors.

Wikimedia Foundation, Community Engagement Insights Report, 2018. Survey of over 4,000 Wikimedia community members.

Wikipedia, “List of Wikipedias” article, English Wikipedia, March 2026. 345 active language editions, 361 total created.

Sorin Adam Matei and Brian Britt, Structural Differentiation in Social Media (Springer, 2017). Analysis of 250 million edits during Wikipedia’s first decade. Finding: 1% of editors produced 77% of content.

Kittur et al., “Power of the Few vs. Wisdom of the Crowd: Wikipedia and the Rise of the Bourgeoisie,” University of Minnesota, 2007. Peer-reviewed analysis of 25 trillion persistent word views.

Wikipedia, “Protection statistics” page. Data as of January 31, 2020: 99.74% of articles open to editing by anyone. 0.067% under pending changes protection.

Wikipedia, “Undetected vandalism” page, updated November 2024. Records of vandalism surviving up to 18+ years. ClueBot NG catch rate stated at approximately 40%.

Wikipedia, “Vandalism on Wikipedia” article. ClueBot NG description, Seigenthaler incident, recent examples including September 2025 Zaldy Co case.

Wikipedia, “Verifiability” policy page (WP:V). Current text as of March 2026.

Wikipedia, “Reliable sources” guideline page (WP:RS). Current text as of March 2026.

Wikipedia, “Fringe theories” guideline page (WP:FRINGE). Current text as of March 2026.

Wikipedia, “What FRINGE is not” essay page. Current text as of March 2026.

Wikipedia, “Circular reporting” article. Documented citogenesis incidents.

Randall Munroe, xkcd comic #978, November 2011. Origin of the term “citogenesis.”

Slate, “Citogenesis: the serious circular reporting problem Wikipedians are fighting,” March 2019.

Virgil Griffith, WikiScanner, August 14, 2007. Database of 34,417,493 anonymous edits.

Reuters, “CIA, FBI computers used for Wikipedia edits,” August 17, 2007.

Wikipedia, “WikiScanner” article.

Wikipedia, “Conflict-of-interest editing on Wikipedia” article.

Bureau of Investigative Journalism, investigation of UK parliamentary Wikipedia editing, March 2012.

Craig Murray, “The Philip Cross Affair,” craigmurray.org.uk, May 18, 2018.

BBC Trending, investigation of Philip Cross account, May-June 2018.

Five Filters, “Philip Cross” investigation, wikipedia.fivefilters.org.

Larry Sanger, “Why Wikipedia Must Jettison Its Anti-Elitism,” Kuro5hin, December 2004.

Larry Sanger, interview, Slate, 2010.

Larry Sanger, “I Founded Wikipedia. Here’s How to Fix It,” The Free Press, October 2025.

The Washington Post, “He co-founded Wikipedia. Now he’s inspiring Elon Musk to build a rival,” October 24, 2025.

Larry Sanger, “My role in Wikipedia,” larrysanger.org.

Wikimedia Foundation, FY 2024-2025 Audit Report Highlights, published November 2025. Revenue: $208.6M. Expenses: $190.9M.

Wikimedia Foundation, Fundraising 2023-24 Report. 8 million donors, $10.58 average donation.

Wikipedia, “Wikimedia Foundation” article, English Wikipedia. Net assets, endowment valuation, major donor history.

Inside Philanthropy, “Who is funding Wikipedia and the Wikimedia Foundation?” Major donor list including Google, Amazon, Meta, Soros, Rockefeller Foundation, Sloan Foundation.

Wikimedia Enterprise, partner announcement, January 15, 2026. Google, Amazon, Meta, Microsoft, Mistral AI, Perplexity as Enterprise partners.

Zia et al., Wikimedia Foundation Knowledge Integrity White Paper, 2019.

Wikipedia, “Verifiability, not truth” essay page. Historical policy language and Douglas Adams quotation.

Philip Roth, “An Open Letter to Wikipedia,” The New Yorker, September 7, 2012.

Olivia Colman, birthday correction incident, reported by Five Blocks and multiple outlets, January 2019.

Wikipedia, “Circular reporting” article. Casio F-91W case: incorrect 1991 release year added 2009, sourced to BBC 2011 article, corrected 2019 after KSNV reported the circular sourcing.

Sinbad false death report, Wikipedia, March 2007. Reported by multiple outlets at the time.

Charles Seife, “Mike Trout and the Millville Meteor,” on Wikipedia’s capacity to alter reality through citogenesis, June 2012.

Larry Sanger, reported analysis of 10,000 Wikipedia editor blocks, June 2025. Finding: 47% of blocks were indefinite (functionally permanent).

Connected Research

This dispatch is part of the TINFOIL™ Consensus Machine series, an eight-part investigation into how institutional knowledge systems manage what counts as credible. Related dispatches:

The Label · The Oldest Trick in the Book · The Co-Founder · The Entry · The Revision · The Giggle Factor · The Science
