The Revision

Wikipedia does not issue corrections. It does not publish retractions. It does not acknowledge that an article once said one thing and now says another. It simply changes. The old version is technically available in the edit history, but no reader is directed to it. The current version is the version. This is how a reference source handles the transition from “conspiracy theory” to “confirmed fact”: not with accountability, but with a quiet edit.

How Wikipedia Forgets

Every Wikipedia article has a revision history. Every edit is logged, timestamped, and attributed to either a username or an IP address. In theory, this means nothing is ever lost. In practice, it means everything is buried.

The revision history of a Wikipedia article is accessible through a tab at the top of every page. It is also, for any heavily edited article, functionally unreadable. An article that has been edited ten thousand times presents ten thousand entries, each with a timestamp, a username or IP address, and a brief edit summary that may or may not describe the actual change. To reconstruct what the article said at any given point in time, a reader must navigate to a specific revision and compare it against another. The tools exist. The labor required to use them is prohibitive for any casual reader, which is to say, for the audience Wikipedia is designed to serve.

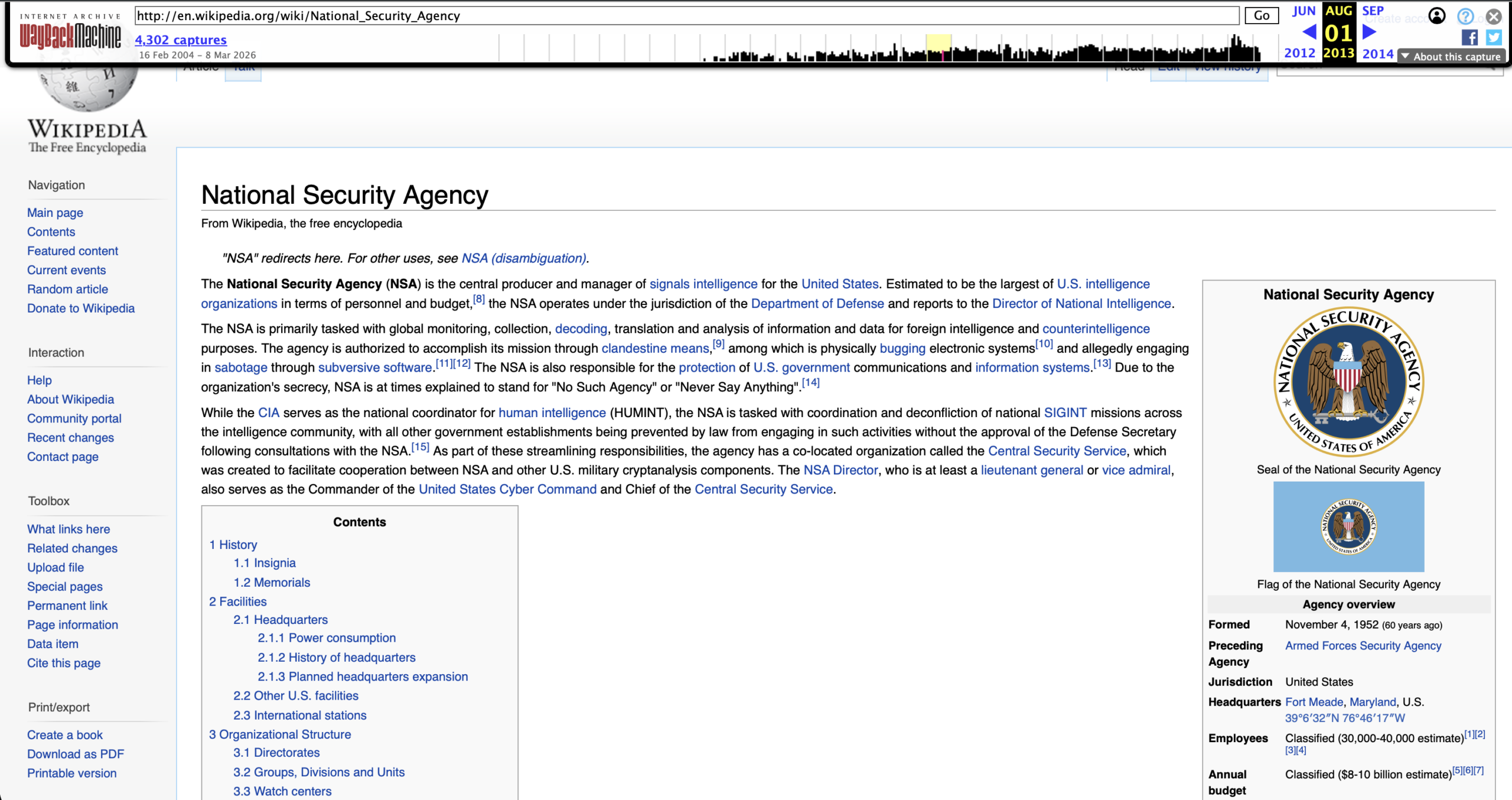

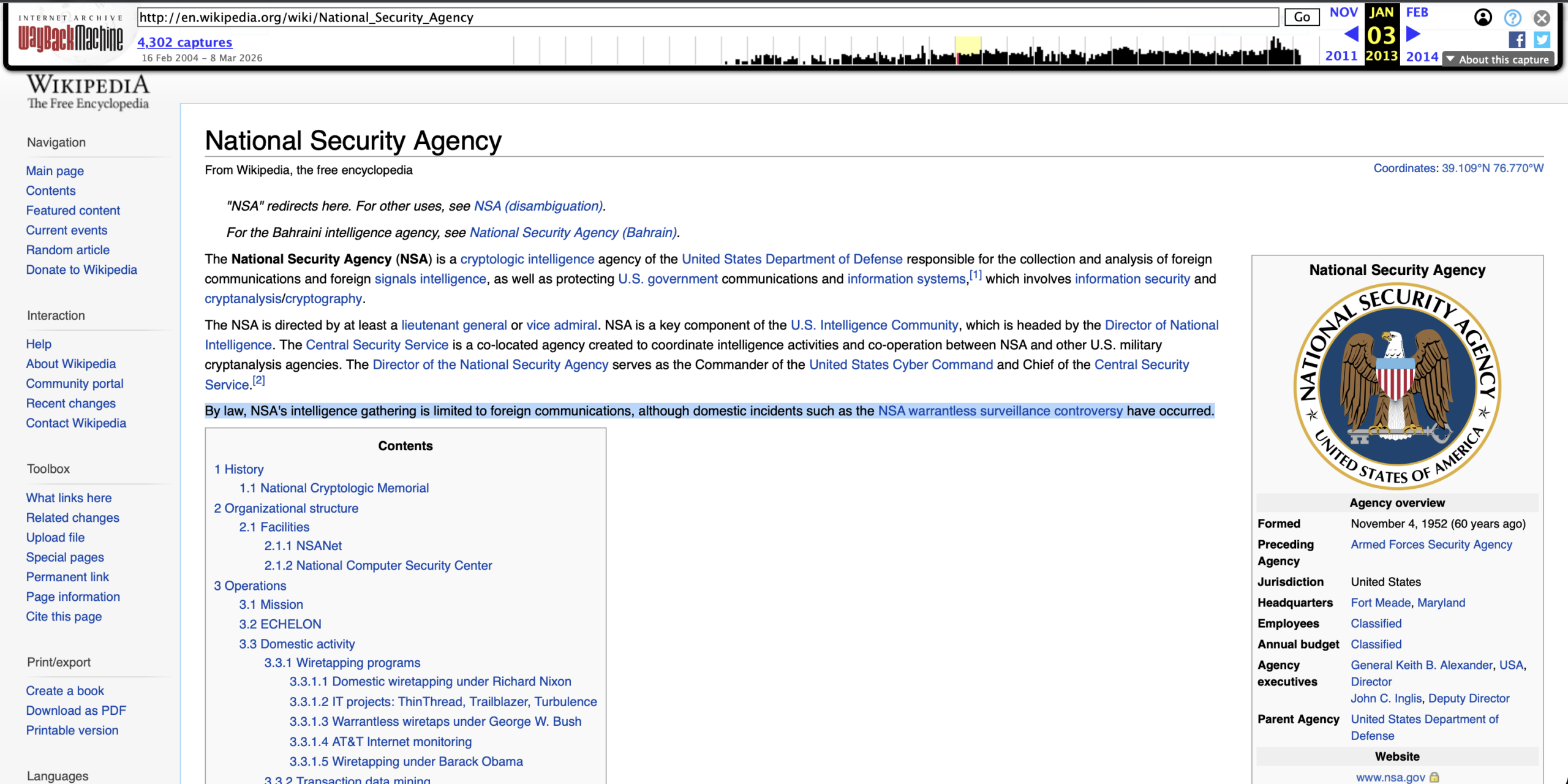
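For a sense of what that comparison involves once two revisions are in hand, here is a minimal sketch using Python's standard difflib module. The two revision texts are invented for illustration and are not quotations from any actual Wikipedia revision; the function name revision_diff is likewise hypothetical.

```python
import difflib

# Hypothetical revision texts, invented for illustration only.
# They are NOT quotations from any actual Wikipedia revision.
older = """The program is alleged to have tested drugs on unwitting subjects.
Most records were reportedly destroyed in 1973.
"""
newer = """The program tested drugs on unwitting subjects.
Most records were destroyed in 1973.
"""


def revision_diff(old_text: str, new_text: str) -> list[str]:
    """Return a unified diff between two revision texts, line by line."""
    return list(
        difflib.unified_diff(
            old_text.splitlines(),
            new_text.splitlines(),
            fromfile="older revision",
            tofile="newer revision",
            lineterm="",
        )
    )


if __name__ == "__main__":
    # Lines starting with "-" exist only in the older text,
    # lines starting with "+" exist only in the newer text.
    for line in revision_diff(older, newer):
        print(line)
```

The diff itself is trivial to compute. The labor lies in choosing which two of ten thousand revisions to feed it.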

The Wayback Machine, operated by the Internet Archive, provides an alternative. It captures periodic snapshots of web pages, including Wikipedia articles, at irregular intervals. By pulling snapshots from different dates, it is possible to place an article’s past and present versions side by side. What emerges is a record of how the consensus machine manages the transition when claims it dismissed are confirmed by the institutions it trusts.
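Pulling a snapshot for a given date can be sketched in a few lines. The sketch below only builds the address; it makes no network request. It assumes the Wayback Machine's usual URL pattern, in which a date stamp is placed before the original address and the archive serves the closest capture it holds. The helper name wayback_url, the two dates, and the article address are illustrative assumptions, and older captures may sit under a different article title.

```python
from datetime import date

# Assumed URL pattern: web.archive.org/web/<YYYYMMDD>/<original URL>.
WAYBACK_PREFIX = "https://web.archive.org/web"


def wayback_url(page_url: str, when: date) -> str:
    """Build a Wayback Machine address asking for the capture nearest a date."""
    timestamp = when.strftime("%Y%m%d")
    return f"{WAYBACK_PREFIX}/{timestamp}/{page_url}"


# Illustrative article address and dates; neither is taken from the dispatch's
# own research notes.
article = "https://en.wikipedia.org/wiki/MKUltra"
then = wayback_url(article, date(2006, 1, 1))
now = wayback_url(article, date(2026, 3, 1))

if __name__ == "__main__":
    print(then)
    print(now)
```

Because captures are taken at irregular intervals, the snapshot actually served may be dated weeks or months away from the date requested.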

This dispatch examines that record for three cases: MKUltra, NSA mass surveillance, and the Gulf of Tonkin incident. In each case, the claim was labeled a conspiracy theory by mainstream institutions. In each case, the claim was subsequently confirmed by government documents, congressional hearings, or declassified records. In each case, Wikipedia’s article was revised to reflect the new consensus. In no case was the revision accompanied by any acknowledgment that the previous version had been wrong.

Case One: MKUltra

The facts are not in dispute. Between 1953 and 1973, the Central Intelligence Agency operated a program called MKUltra that conducted experiments on unwitting human subjects using LSD, electroshock, sensory deprivation, and other techniques aimed at developing methods of mind control. The program was authorized by CIA Director Allen Dulles. It was run by chemist Sidney Gottlieb. It involved at least 80 institutions and 185 researchers, many of whom did not know they were working for the CIA. In 1973, CIA Director Richard Helms ordered the destruction of all MKUltra files. In 1977, approximately 20,000 documents that had escaped destruction were discovered through a Freedom of Information Act request, triggering Senate hearings chaired by Senator Edward Kennedy.

Before the 1977 hearings, claims about CIA mind control experiments were treated as conspiracy theories. After the hearings, they were treated as history. The factual record did not change gradually. It changed on a specific date, when a specific set of documents was made public. The question is how the reference source that now serves as the world’s default encyclopedia handled that transition.

Wikipedia’s article on MKUltra was created on November 9, 2003. Early versions of the article were skeletal, reflecting the limited public documentation available at the time. The article grew steadily as editors added information from the declassified documents, the Senate hearing transcripts, and secondary sources. By the mid-2000s, the article was extensive.

But the editorial history reveals a pattern. In early versions, language frequently hedged: claims were “alleged,” programs were “reported,” and techniques were “said to” have been used. As the article matured, these hedges were progressively removed. The word “alleged” disappeared from descriptions of events confirmed by Senate testimony. The word “reported” was replaced with declarative statements sourced to declassified documents. The transition was not marked by any editorial note. The article simply became more certain over time, as though it had always known what it now stated as fact.
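One way to make this kind of drift measurable is to count hedging terms in each archived snapshot. The following is a rough sketch, not the method used for this dispatch: the term list is drawn from the paragraph above and is not exhaustive, the snapshot texts are invented placeholders, and count_hedges is a hypothetical helper.

```python
import re
from collections import Counter

# Hedging terms drawn from the paragraph above; illustrative, not exhaustive.
HEDGES = ("alleged", "allegedly", "reported", "reportedly", "said to")


def count_hedges(text: str) -> Counter:
    """Count whole-word occurrences of each hedging term, ignoring case."""
    counts = Counter()
    for term in HEDGES:
        pattern = r"\b" + re.escape(term) + r"\b"
        counts[term] = len(re.findall(pattern, text, flags=re.IGNORECASE))
    return counts


# Hypothetical snapshot texts keyed by year. Invented for illustration;
# not quotations from any archived article.
snapshots = {
    2004: "The CIA allegedly dosed subjects. LSD was said to have been used. "
          "Files were reportedly destroyed.",
    2024: "The CIA dosed subjects. LSD was used. Files were destroyed.",
}

# Total number of hedging terms found in each snapshot.
totals = {year: sum(count_hedges(text).values()) for year, text in snapshots.items()}

if __name__ == "__main__":
    for year, total in sorted(totals.items()):
        print(year, total)
```

A falling count across snapshots shows that the hedges were removed. It does not show when, by whom, or on what evidence; for that, the revision history is still required.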

The current article opens with an unhedged declaration: MKUltra “was an illegal human experimentation program designed and undertaken by the U.S. Central Intelligence Agency (CIA).” No reader encountering this sentence today would know that earlier versions of the same article treated the same facts with significantly more caution. The article does not contain a section on how its own framing evolved. It does not note that the claims it now presents as documented history were, within living memory, dismissed as the fantasies of paranoid minds.

The Wikipedia article on MKUltra states that most records were destroyed in 1973 on the orders of CIA Director Richard Helms. It presents this as a historical fact, which it is. What it does not explore is the epistemological implication: the destruction of evidence by the very institution whose claims were being questioned. When someone alleges a conspiracy and the accused party destroys the evidence, the absence of evidence cannot logically be used to argue that the conspiracy did not occur. The MKUltra article handles this correctly by noting the destruction. What the article does not do is extend the logic to other cases where evidence has been destroyed or withheld. The destruction clause is presented as a feature of this one program, not as a pattern of institutional behavior.

Case Two: NSA Mass Surveillance

Before June 5, 2013, the claim that the National Security Agency was conducting mass surveillance of American citizens’ telephone and internet communications was treated, in mainstream discourse, as a conspiracy theory. There were earlier warnings. In 2005, The New York Times reported that the NSA had been conducting warrantless wiretapping under the Bush administration. In 2006, USA Today reported on the NSA’s collection of phone records from major telecommunications companies. That same year, AT&T technician Mark Klein revealed the existence of Room 641A, a facility in San Francisco where the NSA had installed equipment to intercept internet backbone traffic.

Each of these revelations generated a news cycle and then faded. The structural claim, that the U.S. government was conducting mass surveillance of its own citizens at a scale previously associated with conspiracy theory, remained outside the boundary of mainstream consensus. People who made the claim were not consulted as analysts. They were categorized as alarmists.

On June 5, 2013, former NSA contractor Edward Snowden began releasing classified documents to The Guardian and The Washington Post. The documents confirmed the existence of PRISM, a program that allowed the NSA to access data from major technology companies including Google, Facebook, Apple, and Microsoft. They confirmed the bulk collection of telephone metadata from millions of Verizon subscribers under a secret court order. They confirmed a global surveillance apparatus of a scope that exceeded what most conspiracy theorists had claimed.

Wikipedia’s response was immediate and massive. New articles were created: “Global surveillance disclosures (2013-present),” “PRISM (surveillance program),” “XKeyscore.” Existing articles on the NSA, on surveillance, on Edward Snowden were expanded, restructured, and rewritten. The encyclopedia absorbed the new information with remarkable speed.

What it did not do was reconcile the new information with what the old articles had said. The Wikipedia article on the NSA, as it existed in early 2013, did not describe the agency as conducting mass surveillance of American citizens. After June 2013, it did. The transition is visible in the revision history. It is not visible in the article itself. No section of the current NSA article notes that the agency’s activities were, prior to Snowden’s disclosures, dismissed as conspiracy theory by the same institutions that Wikipedia’s reliable sources policy treats as authoritative.

Edward Snowden, as reported by The Washington Post, June 2013

In 2016, Jonathon Penney of the Oxford Internet Institute published a study in the Berkeley Technology Law Journal examining Wikipedia traffic data before and after the Snowden revelations. The study found a statistically significant decline in traffic to privacy-sensitive Wikipedia articles after June 2013, consistent with a “chilling effect” on information-seeking behavior. The study also found evidence that this decline was not temporary but represented a sustained change in the long-term traffic trend. Wikipedia, in other words, was not merely a reference source that revised itself after the surveillance was confirmed. It was also a site where the confirmed surveillance measurably changed how people used the platform. The encyclopedia documented the surveillance. The surveillance, in turn, shaped who was willing to read the encyclopedia.

Case Three: The Gulf of Tonkin

On August 2, 1964, three North Vietnamese torpedo boats engaged the USS Maddox in the Gulf of Tonkin. The Maddox was on a signals intelligence mission, collecting electronic data in waters claimed by North Vietnam while South Vietnamese forces, trained and directed by the United States, conducted raids on the North Vietnamese coast. The engagement was real. The Maddox fired first, according to a 2001 internal NSA historical study, and the torpedo boats attacked in response. One North Vietnamese sailor was killed. The Maddox sustained a single bullet hole. The Johnson administration presented the encounter as an unprovoked attack in international waters. It was neither.

Two days later, on August 4, the administration claimed it had happened again. North Vietnamese torpedo boats had attacked the Maddox and the USS Turner Joy in a second, deliberate assault. Congress responded by passing the Gulf of Tonkin Resolution on August 7, with a unanimous vote in the House and only two dissenting votes in the Senate. The resolution authorized the president to take “all necessary measures” to defend American forces in Southeast Asia. It was the legal foundation for the Vietnam War.

The second attack did not happen. No North Vietnamese vessels were present.

Doubts emerged almost immediately. Captain John Herrick, commander of the Maddox, sent a message within hours expressing uncertainty about what had occurred. Senator Wayne Morse, one of the two dissenting votes, publicly questioned the administration’s account. Over the following years, skeptics who questioned the official narrative were treated as conspiracy theorists, anti-war agitators, or both.

In 2005, the National Security Agency released a declassified study by NSA historian Robert J. Hanyok. The study concluded that NSA intelligence officers had deliberately distorted signals intelligence to support the claim that the August 4 attack had occurred. The study stated that information was presented to Johnson administration officials “in such a manner as to preclude responsible decision makers in the Johnson administration from having the complete and objective narrative of events.” Only intelligence that supported the attack narrative was forwarded. Contradicting evidence was suppressed.

In 2007, the NSA released the full 522-page official history, Spartans in Darkness: American SIGINT and the Indochina War, 1945-1975, which confirmed Hanyok’s findings. The NSA officially reversed its own verdict on the events of August 4, 1964.

Wikipedia’s article on the Gulf of Tonkin incident was created on January 21, 2004. Its early versions reflected the ambiguity in the public record at the time: the article noted doubts about the second attack but did not present a definitive conclusion. After the 2005 and 2007 declassifications, the article was progressively revised to reflect the new documentary evidence. The current article opens by stating that the second attack “did not happen” and that the NSA “deliberately skewed intelligence” to support the claim.

The transition is clean. The article went from uncertainty to certainty as the evidence became available. What the article does not document is the forty-one-year period during which people who stated what the article now states as fact were dismissed as conspiracy theorists. The article does not contain a section on how the false narrative was maintained. It does not name the journalists, politicians, or commentators who enforced the official account. It does not examine how skeptics were characterized before the declassification proved them right.

The article presents the confirmed truth. It does not present the history of the lie. It does not document what happened to the people who told the truth before the truth was permitted: the journalists dismissed as anti-American, the veterans labeled as disloyal, the senators who voted no and paid for it in the next election. For forty-one years, between the fabricated attack in 1964 and the NSA’s declassification in 2005, the people who were right were treated as though they were wrong. The article that now confirms they were right contains no record of what that cost them.

The Scanner

The question of who edits Wikipedia articles about the activities of intelligence agencies is not theoretical. In 2007, Virgil Griffith’s WikiScanner tool cross-referenced anonymous Wikipedia edits with public IP address databases and documented that edits to articles about CIA operations, FBI detention facilities, and other sensitive topics had been made from the networks of the agencies those articles described. The tool captured only anonymous edits. Registered editors, who produce the majority of Wikipedia’s content, were invisible to it. The full documentation of WikiScanner’s findings, including its limitations and implications, appears in The Reliable Source.

For the three cases examined in this dispatch, the implication is specific: the institutions whose activities were being described in Wikipedia articles were also editing those articles, from their own computers, during the period when those activities were still being denied.
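The cross-referencing described in this section is mechanically simple, which is part of what made it striking. Below is a minimal sketch of the general technique using Python's standard ipaddress module. It is not WikiScanner's actual code. The organization names, the edit list, and the function attribute_edits are hypothetical, and the address ranges are reserved documentation ranges, not the real networks of any agency.

```python
import ipaddress

# Hypothetical organization-to-network table. These are reserved documentation
# ranges (RFC 5737), NOT the real address blocks of any agency.
ORG_NETWORKS = {
    "Example Agency A": ipaddress.ip_network("192.0.2.0/24"),
    "Example Agency B": ipaddress.ip_network("198.51.100.0/24"),
}

# Hypothetical anonymous edits: (article title, editor IP address).
ANONYMOUS_EDITS = [
    ("Article one", "192.0.2.17"),
    ("Article two", "203.0.113.5"),
    ("Article three", "198.51.100.200"),
]


def attribute_edits(edits, networks):
    """Return (article, ip, organization) for each edit whose IP is in a known range."""
    matches = []
    for article, ip_text in edits:
        address = ipaddress.ip_address(ip_text)
        for org, network in networks.items():
            if address in network:
                matches.append((article, ip_text, org))
    return matches


matches = attribute_edits(ANONYMOUS_EDITS, ORG_NETWORKS)

if __name__ == "__main__":
    for article, ip_text, org in matches:
        print(f"{article}: {ip_text} falls inside a range registered to {org}")
```

The limitation noted above is visible in the input: an edit made from a registered account carries no public IP address, so it never enters the list at all.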

The Pattern

Three cases. Three confirmed conspiracies. Three Wikipedia articles that transitioned from hedged language to declarative statements as the evidence became undeniable. The pattern across all three is identical.

Phase one: the claim exists in the public discourse. It is labeled a conspiracy theory. Wikipedia’s article, if it exists, treats the claim with the skepticism that its reliable sources policy demands. The article reflects the consensus of the institutions Wikipedia trusts: the claim is unsubstantiated, the claimants are unreliable, the official account is the account.

Phase two: evidence emerges. Documents are declassified. Hearings are held. Whistleblowers come forward. The evidence is specific, documented, and sourced to the very institutions Wikipedia’s policy treats as authoritative. The claim transitions from “conspiracy theory” to “confirmed.”

Phase three: the article is revised. Hedging language is removed. Declarative statements replace qualifications. New sections are added. Sources are updated. The article now reflects the new consensus.

Phase four: the revision is complete. The article reads as though it has always known what it now states. No section documents the period during which the article reflected the false consensus. No editorial note acknowledges that the previous version was wrong. No analysis examines how the editorial system that produced the wrong version might produce wrong versions of other articles currently presenting the consensus view.

The cycle has no phase five. There is no accountability mechanism. There is no review of how the editorial system performed. There is no examination of whether the reliable sources policy, which directed editors to treat the claims as conspiracy theories, bears any responsibility for the error. The system that was wrong simply updates itself and continues operating by the same rules that produced the error.

A careful reader will notice that all three cases examined in this dispatch are old. MKUltra was confirmed in 1977. The Gulf of Tonkin fabrication was declassified in 2005. NSA mass surveillance was revealed in 2013, the most recent of the three and already over a decade ago. This is not an accident. It is the temporal pipeline at work. Wikipedia does acknowledge that some claims once labeled conspiracy theories have been confirmed. But the pipeline through which a claim travels from “conspiracy theory” to “confirmed” to “safe for the encyclopedia to state flatly” is long, and it leads almost exclusively to cases that feel historical. MKUltra is taught in college courses. The Gulf of Tonkin is in textbooks. Even the Snowden revelations have been absorbed into the settled past. The pipeline admits the truth, but only after enough time has passed that the truth no longer threatens the institutions that suppressed it. The relevant question is not whether Wikipedia accurately describes conspiracies confirmed decades ago. It is whether the same system is accurately describing claims being labeled “conspiracy theory” right now. That question has no answer, because the system that would need to answer it is the same system that produced the decades-long delay.

What No Article Contains

Consider what a truly self-aware reference source would include in an article about a confirmed conspiracy that it had previously described differently.

It would include a section on the history of the article itself: what the article said before confirmation, what sources it relied on, how editors handled dissenting evidence, and when and why the framing changed. It would include an analysis of which reliable sources got it right and which got it wrong, and whether the sources that got it wrong have been reevaluated as reliable. It would include a discussion of whether the editorial policies that produced the earlier framing, specifically the reliable sources policy and the fringe theories policy, need revision in light of the outcome.

No Wikipedia article contains any of this. The omission is not an oversight. It is structural. Wikipedia’s editorial policies do not provide for institutional self-examination. The reliable sources policy does not include a mechanism for downgrading sources that have been consistently wrong. The fringe theories policy does not include a mechanism for recognizing that a claim classified as fringe has been confirmed. These policies operate in one direction: they exclude claims from the consensus. They do not include a procedure for admitting that the consensus was wrong.

The result is a reference source that is always correct in the present tense. Whatever Wikipedia says now is what the reliable sources support now. What Wikipedia said before, when the reliable sources supported a different conclusion, is stored in the revision history but absent from the article. The encyclopedia presents itself as a living document, continuously updated. It does not present itself as a document with a track record: a history of errors that, in every case examined here, run in the same direction, toward the institutional account and away from the claims that challenged it.

Wikipedia’s editorial system produces errors in one direction more readily than the other. It is structurally easier for the system to dismiss a true claim as fringe than to accept a fringe claim as true. This is because the reliable sources policy privileges institutional consensus, and institutional consensus, by definition, lags behind the evidence when the evidence contradicts the institutions. The asymmetry is not the product of bad faith by individual editors. It is the predictable output of a system designed to mirror institutional authority. When institutions are right, Wikipedia is right. When institutions are wrong, Wikipedia is wrong in the same way, for the same duration, and with the same confidence. The system does not merely reflect the consensus. It enforces it, and it enforces it with the authority of an encyclopedia that 1.7 billion people consult every month.

The Quiet Edit

There is a term in traditional publishing for what happens when an error is discovered and corrected: a correction, an erratum, a retraction. Each of these terms implies acknowledgment. The publication admits it was wrong. It states what was wrong. It explains what is now correct. The reader is informed that the record has changed.

Wikipedia has no equivalent. Its model is the palimpsest: the old text is scraped away and the new text is written over it. The old text is not destroyed; it exists in the revision history, available to anyone willing to do the archaeological work of finding it. But the article itself, the text that 1.7 billion monthly visitors encounter, contains no trace of what it used to say.

The problem compounds downstream. In 2021, the Wikimedia Foundation launched Wikimedia Enterprise, a paid API service providing structured access to Wikipedia content for high-volume commercial users. By 2026, its partners included Google, Amazon, Meta, Microsoft, Mistral AI, and Perplexity. The Enterprise API delivers article content plus metadata about the most recent revision: editor name, size of change, credibility scores, revert probability. What it does not deliver is the full edit history. AI systems trained on Wikipedia content, voice assistants that cite Wikipedia, search engines that display Wikipedia summaries in knowledge panels: all of these inherit the current version. The palimpsest becomes the training data. The quiet edit becomes the ground truth. The revision history, where the institutional memory of what Wikipedia used to say is stored, is not part of the data pipeline that feeds the systems through which most people now encounter encyclopedic information.

This is not a bug. It is a design principle. Wikipedia’s fifth pillar states that the encyclopedia has “no firm rules.” Its editorial model is built on the premise that continuous revision produces accuracy over time. The assumption is that the current version is always the best version, because it reflects the most recent consensus of the most active editors working with the most up-to-date sources.

The assumption is correct in cases where the consensus moves toward truth. It is catastrophically wrong in cases where the consensus is itself the problem. When the institutions that Wikipedia’s reliable sources policy trusts are the same institutions whose activities are being described in the article, and when those institutions have a documented history of denying the activities in question, the reliable sources policy does not correct for the error. It amplifies it.

MKUltra was denied by the CIA until documents made denial impossible. NSA mass surveillance was denied by the intelligence community until Snowden made denial impossible. The Gulf of Tonkin second attack was maintained as fact by the Department of Defense until declassification made maintenance impossible. In each case, Wikipedia reflected the denial while it was operative and the confirmation after it was unavoidable. In each case, the transition was accomplished through a quiet edit.

The quiet edit is not a conspiracy. It is something more durable: a system that operates exactly as designed and produces exactly this result.

The Question This Series Asks

This is the final dispatch in the Consensus Machine series. The series began with The Label, which documented how the phrase “conspiracy theory” was converted from a neutral descriptor into a mechanism of dismissal. It traced the technique through millennia of institutional history in The Oldest Trick in the Book. It documented the evidentiary record in The List, the absence of any accuracy measurement in The Percentage, the Wikipedia editorial system in The Reliable Source, the co-founder’s critique in The Co-Founder, and the specific application to TINFOIL’s subject matter in The Entry.

This dispatch completes the cycle. The label is applied. The claim is dismissed. The evidence accumulates. The claim is confirmed. The article is revised. The revision is silent. The system continues.

The question is not whether Wikipedia is useful. It is. The question is not whether Wikipedia editors act in bad faith. Most do not. The question is not whether the encyclopedia should be destroyed, replaced, or boycotted.

The question is simpler and harder. It is the question that the entire Consensus Machine series exists to surface:

If the system that determines what counts as knowledge has a documented pattern of getting specific categories of claims wrong, and if that system has no mechanism for examining its own error rate, and if 1.7 billion people consult it every month as though it were a neutral reference, then what is it?

It is a consensus machine. It produces consensus. Whether the consensus is true is a question the machine is not designed to ask.

This dispatch cites 23 sources across four categories. Estimated breakdown: primary documents (Senate hearing transcripts, declassified NSA studies, FOIA-released intelligence records, WikiScanner database, Wikipedia revision histories via Wayback Machine) ~45%; independent journalism (The Guardian, The New York Times, Reuters, Wired, TechCrunch, The Register, Vietnam magazine) ~25%; academic and scholarly (Kinzer, Penney/Berkeley Technology Law Journal) ~10%; platform self-reporting (Wikipedia’s own article on WikiScanner, Wikipedia revision histories as evidence of editorial practice, Wikimedia Enterprise API documentation and partner announcements) ~20%.

These percentages are editorial estimates, not computed metrics. A source may appear in more than one category. A dispatch built on comparing what a reference source said at different points in time will necessarily rely heavily on primary documents: the archived articles themselves, the declassified records that triggered the revisions, and the congressional testimony that confirmed the underlying claims. The relevant question is whether independent sources corroborate the factual claims. In this dispatch, all factual claims are independently verifiable through at least one non-subject source. The full source list follows.

Sources

U.S. Senate Select Committee on Intelligence, “Project MKULTRA, the CIA’s Program of Research in Behavioral Modification,” Joint Hearing Before the Select Committee on Intelligence, 95th Congress, 1st Session, August 3, 1977.

Stephen Kinzer, Poisoner in Chief: Sidney Gottlieb and the CIA Search for Mind Control (New York: Henry Holt and Company, 2019).

Edward Snowden, documents disclosed to Glenn Greenwald (The Guardian) and Barton Gellman (The Washington Post), beginning June 5, 2013.

“NSA Collecting Phone Records of Millions of Verizon Customers Daily,” The Guardian, June 5, 2013.

“NSA Prism Program Taps in to User Data of Apple, Google and Others,” The Guardian, June 6, 2013.

Jonathon W. Penney, “Chilling Effects: Online Surveillance and Wikipedia Use,” Berkeley Technology Law Journal, Vol. 31, No. 1, 2016.

Robert J. Hanyok, “Skunks, Bogies, Silent Hounds, and the Flying Fish: The Gulf of Tonkin Mystery, 2-4 August 1964,” Cryptologic Quarterly, National Security Agency, declassified and released November 30, 2005.

National Security Agency, Spartans in Darkness: American SIGINT and the Indochina War, 1945-1975, by Robert J. Hanyok, declassified 2007. NSA Center for Cryptologic History.

“New Light on Gulf of Tonkin,” The New York Times, October 31, 2005.

Carl Otis Schuster, “Case Closed: The Gulf of Tonkin Incident,” Vietnam magazine, June 2008. Republished by HistoryNet.

Virgil Griffith, WikiScanner, released August 14, 2007. Database of 34,417,493 anonymous Wikipedia edits, February 7, 2002 – August 4, 2007. Full analysis in The Reliable Source.

“See Who’s Editing Wikipedia: Diebold, the CIA, a Campaign,” Reuters, August 14, 2007.

“Votes and Edits,” Wired, August 13, 2007.

Wikipedia, “MKUltra,” revision history, created November 9, 2003. Accessed via Wayback Machine snapshots, multiple dates.

Wikipedia, “Gulf of Tonkin incident,” revision history, created January 21, 2004. Accessed via Wayback Machine snapshots, multiple dates.

Wikipedia, “NSA warrantless surveillance (2001-2007),” “PRISM (surveillance program),” “2010s global surveillance disclosures,” revision histories. Accessed via Wayback Machine snapshots, multiple dates.

Wikipedia, “WikiScanner,” article. Accessed March 2026.

Church Committee, “Final Report of the Select Committee to Study Governmental Operations with Respect to Intelligence Activities,” U.S. Senate, 94th Congress, 2nd Session, April 26, 1976.

Internet Archive / Wayback Machine (web.archive.org), periodic snapshots of Wikipedia articles cited above, various dates 2003-2026.

Wikimedia Enterprise API documentation, enterprise.wikimedia.com/api/ and enterprise.wikimedia.com/docs/. Accessed March 2026.

Wikimedia Enterprise, Meta-Wiki, meta.wikimedia.org/wiki/Wikimedia_Enterprise. Partner announcements and FAQ. Accessed March 2026.

“Wikipedia Urges AI Companies to Use Its Paid API, and Stop Scraping,” TechCrunch, November 10, 2025.

“Six More AI Outfits Sign for Wikimedia’s Fastest APIs,” The Register, January 16, 2026.

Connected Research

This dispatch is the final entry in the TINFOIL™ Consensus Machine series, an eight-part investigation into how institutional knowledge systems manage what counts as credible. The full series:

The Label · The Oldest Trick in the Book · The List · The Percentage · The Reliable Source · The Co-Founder · The Entry

Related dispatches: They Put Electrodes in His Brain · The Giggle Factor · The Science
